Mary Ann Skweres sniffs out the 411 on UVPhactory's CG stylings for Pete Miser's hip-hop video, Scent of a Robot.

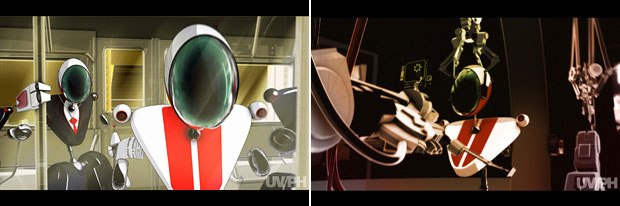

Scent of a Robot is full of fun details like the subway commuters who are robots with their own robot iPods (left). All images © UVPHACTORY.

Pete Miser's hip-hop single tells the story of a cubicle-dwelling everyman who discovers that he is a robot. The music video, a metaphor for the human condition in the programmed, conditioned world of the new millennium, is a clever and humorous visualization crafted from a combination of live-action footage and 3D animation by the creative team at UVPhactory, the New York-based design and production company co-founded by Scott Sindorf and Damijan Saccio. Creative director Alexandre Moors, who directed the video and did most of the robot research, admits, "It was an appealing subject to explore."

The team was responsible for every aspect of the video short of recording the music. They created the concept, designed and executed the animation, shot the live-action footage and completed both the offline and online edits. The project was very much a collaborative venture. According to Sindorf, "At the UVPhactory, the creative team wears many hats. This music video presented the opportunity for all of us to collaborate."

Scent of a Robot allowed creative director and director Alexandre Moors (left) and company co-founders Damijan Saccio (center) and Scott Sindorf to fully collaborate.

The team tried to stay true to the narrative within the song but wanted to give it a little twist. Moors likened the main character to a laboratory rabbit infused with conditioned programming. The video is full of little details that reinforce this theme. One fun example is the revelation that all the commuters on the subway are robots, complete with their own robot iPods. Throughout, the video's creative vision highlights the tendency of the masses to act and look alike.

UVPhactory has done a lot of work for the Sci Fi Channel, so the team has plenty of experience building robots, but what's special about this robot is that Moors did a lot of design development for it. They tried to create a unique character that is still robotic but is imbued with hip-hop qualities and a real personality. Moors explains his design concepts: "The robot is a mix of Pete Miser's own appearance (the way he walks, his silhouette) with the Mickey Mouse of the '30s, Steamboat Willie, the beginning of animation for most of us. On top of that I liked the oval head that gives an ant feel. I think that was working with the song. Since we all are working robots, I wanted the robot to look like a busy ant. Plus a couple of other influences like Moebius, the French graphic illustrator."

The video was quite elaborate, with around 50 shots spanning its 3:30 running time. To tackle it, the work was broken down into scenes. The 3D portion had about 30 scenes, but there was a lot of action, so these were broken down into smaller pieces, and each shot consisted of multiple layers. The team worked from concept sketches. In the computer they created the whole world: the environment, the robots, the action and the camera moves. Sindorf shares, "We started right away building a lower-resolution version of everything so that we immediately had stuff to work with."

Although Moors usually lays out every single shot in a storyboard when he does live action, for this project he didn't use storyboards because he was excited to play with the camera in the 3D world. He didn't want the limits of something drawn on paper. Instead, the team made a shot list so that they knew the number of shots they needed to produce. For each shot, Moors sat down with Jake Slutsky, the senior animator, and moved the camera around. That way they were able to pick the most interesting shots: shots that were not live-action oriented but could only exist in an animated 3D world. "When I started doing storyboards, they were very live-action, 2D oriented because I'm used to working with a real camera. Here I can have my camera go from the staircase into a subway train car, go out the window and then make a 360 around, because it's all in the 3D world," adds Moors.

Although it was an unorthodox way to work, the team chose this process for a couple of reasons. They had all the time in the world to play around, but above all, the whole project grew together. Everybody was working concurrently: people were designing robots while the live action was being edited, and as each shot became ready, the camera moves were added. Saccio comments, "It was a very organic process. We were allowed, because of the 3D tools, to look at all the options possible and try and create the most dramatic compositions in each shot."

No motion capture was used in the animation, including the robot dance scenes; everything was animated by hand. Saccio explains, "Main character animator Ryan Bradley rented break-dance instructional videos. He used some of that information. It was really informative to break down the moves. We actually captured that and animated on top of some of it. He was able to grab a move from one area and another move from another area and choreograph them together." This worked better for the concept of the video because motion capture creates very lifelike movements, while this video was better served by playful moves, like a cartoon or caricature. With traditional keyframing, the animators could exaggerate, squash and stretch the moves. "Like the head spinning 360 degrees, which is very difficult for actors to do," jokes Moors.
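Hand keyframing is what made that exaggeration possible. As a rough, generic illustration (not the team's actual XSI setup), the Python sketch below eases a hypothetical "stretch" channel between hand-set keys and derives a volume-preserving squash-and-stretch scale from it. The key values, the smoothstep easing curve and the rule that width shrinks as height grows (so that x * y * z stays at 1) are all assumptions made for the example.

```python
import math
from bisect import bisect_right

# Hypothetical keys for a hop: (frame, vertical stretch). 1.0 = rest pose.
# These values are illustrative, not taken from the production.
HOP_KEYS = [(0, 1.0), (4, 0.6), (8, 1.3), (12, 1.0)]


def ease_in_out(t):
    """Smoothstep easing: slow out of one key, slow into the next."""
    return t * t * (3.0 - 2.0 * t)


def sample(keys, frame):
    """Interpolate a channel at `frame` from a sorted list of (frame, value) keys."""
    frames = [f for f, _ in keys]
    if frame <= frames[0]:
        return keys[0][1]
    if frame >= frames[-1]:
        return keys[-1][1]
    i = bisect_right(frames, frame) - 1  # index of the key at or before `frame`
    (f0, v0), (f1, v1) = keys[i], keys[i + 1]
    t = ease_in_out((frame - f0) / (f1 - f0))
    return v0 + (v1 - v0) * t


def squash_stretch(stretch_y):
    """Return an (x, y, z) scale that keeps volume constant: x * y * z == 1."""
    side = 1.0 / math.sqrt(stretch_y)
    return (side, stretch_y, side)


for frame in range(0, 13, 2):
    sx, sy, sz = squash_stretch(sample(HOP_KEYS, frame))
    print(f"frame {frame:2d}: scale x={sx:.2f} y={sy:.2f} z={sz:.2f}")
```

Exaggerating a move then comes down to pushing the key values further than a real body could go (0.4 instead of 0.6, say), which is exactly the kind of motion a captured performance would not supply.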

The live-action footage generally serves as the robot's point of view. The team wanted to treat it so that it would look like a digital environment and ease the transitions. To seamlessly incorporate it into the animation, the team created a pixelated "robot vision" using a simple mosaic filter in After Effects, which broke the footage down into square blocks with limited colors. To further unify the aesthetic, they used SOFTIMAGE|XSI's toon shader as a base and built custom shaders to couple the hard-edged toon look with more natural soft shading. "We wanted to stay away from clichés and explore new ground. We wanted to push the limits a bit in terms of what conventional sci-fi robots see and how they act," admits Sindorf.
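Both looks can be approximated with very little code. The Python sketch below is a generic illustration, not After Effects' Mosaic effect or the team's custom XSI shaders: `mosaic` averages each square tile of a frame, `posterize` snaps every channel to a few levels to mimic the limited palette, and `toon_soft` blends a hard-banded diffuse term with smooth Lambert shading. Frames are assumed to be lists of rows of 8-bit (r, g, b) tuples, and the tile size, level count, band count and mix weight are arbitrary choices for the example.

```python
import math


def mosaic(image, block):
    """Replace each block x block tile with its average color (integer RGB)."""
    h, w = len(image), len(image[0])
    out = [list(row) for row in image]
    for by in range(0, h, block):
        for bx in range(0, w, block):
            ys = range(by, min(by + block, h))
            xs = range(bx, min(bx + block, w))
            tile = [image[y][x] for y in ys for x in xs]
            avg = tuple(sum(px[c] for px in tile) // len(tile) for c in range(3))
            for y in ys:
                for x in xs:
                    out[y][x] = avg
    return out


def posterize(image, levels):
    """Snap each 0-255 channel to one of `levels` evenly spaced values."""
    step = 255 / (levels - 1)
    return [[tuple(round(round(c / step) * step) for c in px) for px in row]
            for row in image]


def toon_soft(n_dot_l, bands=3, mix=0.3):
    """Blend hard-edged banded (toon) diffuse with smooth Lambert diffuse.

    n_dot_l is the cosine between the surface normal and the light direction.
    mix=0 gives a pure toon look; mix=1 gives plain soft shading.
    """
    lambert = max(0.0, n_dot_l)
    band = min(math.floor(lambert * bands), bands - 1)  # which hard band we are in
    toon = band / (bands - 1)
    return (1.0 - mix) * toon + mix * lambert


# A tiny 2 x 2 "frame" run through the hypothetical robot-vision chain.
frame = [[(0, 0, 0), (100, 100, 100)],
         [(200, 200, 200), (100, 0, 50)]]
robot_vision = posterize(mosaic(frame, 2), 4)
print(robot_vision)
```

The `mix` weight is the single knob in this sketch that trades the hard toon edge against softer, more natural falloff; a production shader would likely expose more controls than that.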

Besides Sindorf, Saccio and Moors, the remainder of the creative team consisted of senior producer Brian Welsh, live-action director of photography Nick Tramantano, senior designer/3D animator/compositor Jake Slutsky, lead character animator Ryan Bradley, designer/3D animator/compositor Bashir Hamid, compositor/designer Colin Hess, editor Damien Baskette and production assistant Alexis Stein.

UVPhactory composited with Adobe After Effects 6.5, a perfect program for a company that uses freelance compositors. The team believes that After Effects can achieve Flame-like quality and that the result depends more on the artist than on the tools. "In the last two years After Effects has added 3D features. Now we can work in 3D. It's also added Color Finesse, a color correction tool that matches the high-end DaVinci in fancy post-production houses. It's a full software package," comments Moors, who also did some of the compositing for the video. The team used Color Finesse extensively. In addition, because the video combines 3D and 2D compositing, the artists needed to switch back and forth between After Effects and the 3D program SOFTIMAGE|XSI 4.0, which they were able to do on the same box. They also used a third-party plug-in that connected the 3D world in XSI to After Effects by bringing in the 3D camera information, allowing the team to put 2D graphics into the 3D action. This was all done after the fact in After Effects. Apple Final Cut Pro 4.5, Adobe Photoshop CS and Adobe Illustrator CS were also used in the completion of this project.
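The reason a 3D camera matters for 2D graphics is that a flat element only sits convincingly in the 3D action if it is placed where the animated camera would see it. The plug-in is not named, so the Python sketch below is only a generic pinhole-camera projection under simplifying assumptions: the camera can pan (yaw) but not tilt or roll, it looks down +Z at zero yaw with +Y up, the focal length is given in pixels, and the camera track and comp size are hypothetical values for the example. Projecting an anchor point once per frame with the exported camera values yields a 2D track for the graphic.

```python
import math


def project(point, cam_pos, cam_yaw_deg, focal_px, width, height):
    """Project a world-space (x, y, z) point to 2D comp pixel coordinates.

    Assumed convention: at yaw 0 the camera looks down +Z with +Y up;
    positive yaw turns it toward +X. Screen y grows downward.
    Returns None when the point is behind the camera.
    """
    # Express the point relative to the camera position.
    dx = point[0] - cam_pos[0]
    dy = point[1] - cam_pos[1]
    dz = point[2] - cam_pos[2]
    # Undo the camera's pan so the camera axes line up with the world axes.
    a = math.radians(cam_yaw_deg)
    xc = dx * math.cos(a) - dz * math.sin(a)
    zc = dx * math.sin(a) + dz * math.cos(a)
    if zc <= 0:
        return None
    # Perspective divide, then shift the origin to the comp center.
    sx = width / 2 + focal_px * xc / zc
    sy = height / 2 - focal_px * dy / zc
    return (sx, sy)


# Hypothetical per-frame camera values: (position, yaw in degrees).
camera_track = [((0.0, 1.5, -10.0), 0.0), ((1.0, 1.5, -9.0), 5.0), ((2.0, 1.5, -8.0), 10.0)]
sign_anchor = (0.0, 2.0, 0.0)  # world position a 2D graphic should stick to

for frame, (pos, yaw) in enumerate(camera_track):
    print(frame, project(sign_anchor, pos, yaw, focal_px=1000, width=1920, height=1080))
```

A real camera export would also carry tilt, roll and lens data per frame; the single-axis rotation here just keeps the geometry easy to follow.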

Scent of a Robot was a joint venture between Miser's label, Ho-Made Media, and independent music marketing company Coup de Grace. Because Miser is an indie artist, he gave the team the freedom to pursue their creative vision and was completely behind the project. Saccio concludes, "It was a great experience for all of us as a design company to put our full attention and resources into this video. I don't think we've had a project in the office in the last couple of years that everyone has enjoyed working on as much as this. Because of that, people work even harder."

Mary Ann Skweres is a filmmaker and freelance writer. She has worked extensively in feature film and documentary post-production with credits as a picture editor and visual effects assistant. She is a member of the Motion Picture Editors Guild.